If you process documents at large scale and want to connect unstructured data, IBM’s Deep Search is a strong option, and it integrates neatly with Argilla for curating your dataset.

Deep Search uses natural language processing to ingest and analyze massive amounts of data, both structured and unstructured. Researchers can then extract, explore, and make connections faster than ever.

Start Structuring data

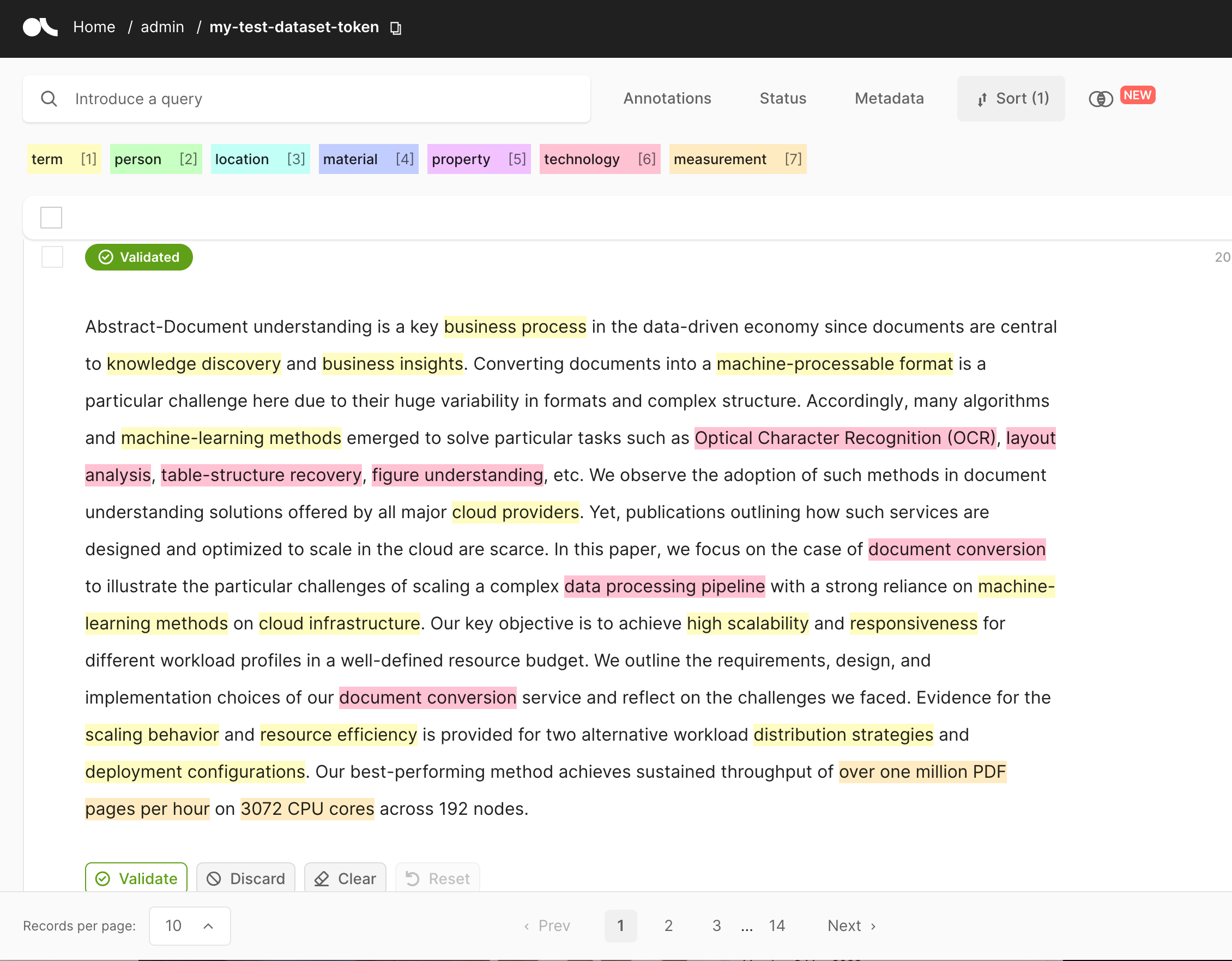

In this example, we use the output of a document converted by Deep Search to populate a dataset in Argilla. This lets the user annotate the text segments for multiple purposes, e.g. text classification or named entity recognition, and train custom models that fit those purposes.

Initializing Deep Search

First, we initialize the Deep Search client from the credentials contained in the file `../../ds-auth.json`. This file can be generated with `!deepsearch login --output ../../ds-auth.json`. More details are in the docs.

Initializing Argilla

Most readers are probably familiar with setting up Argilla, but here is a short recap. The easiest way to set up your own instance is through a 🤗 Hugging Face Space, as documented at https://huggingface.co/docs/hub/spaces-sdks-docker-argilla. Alternatively, use any of the other Argilla deployment methods listed at https://docs.argilla.io/en/latest/getting_started/installation/deployments/deployments.html. Once the instance is running, set its URL and API key as environment variables.

```bash
export ARGILLA_API_URL="" # for example https://<USER>-<NAME>.hf.space
export ARGILLA_API_KEY="" # for example "admin.apikey"
```

Configuration

To give a concise overview of the parameters and packages used in this integration, the code snippet below collects them in one place.

```python
import os
from pathlib import Path

# Deep Search configuration
CONFIG_FILE = Path("../../ds-auth.json")
PROJ_KEY = "1234567890abcdefghijklmnopqrstvwyz123456"
INPUT_FILE = Path("../../data/samples/2206.00785.pdf")

# Argilla configuration
ARGILLA_API_URL = os.environ["ARGILLA_API_URL"]  # required env var
ARGILLA_API_KEY = os.environ["ARGILLA_API_KEY"]  # required env var
ARGILLA_DATASET = "deepsearch-documents"

# Tokenization
SPACY_MODEL = "en_core_web_sm"
```

Now download the spaCy model, which will be used for tokenizing the text.

```python
!python -m spacy download {SPACY_MODEL}
```

Import the required dependencies.

```python
# Import standard dependencies
import json
import tempfile
import typing
from zipfile import ZipFile

# IPython utilities
from IPython.display import display, Markdown, HTML, display_html

# Import the deepsearch-toolkit
import deepsearch as ds

# Import specific to the example
import argilla as rg
import spacy
from pydantic import BaseModel
```

Lastly, we define a pydantic model so that we can map Deep Search document sections to an Argilla-friendly structure.

```python
class DocTextSegment(BaseModel):
    page: int  # page number
    idx: int  # index of text segment in the document
    title: str  # title of the document
    name: str  # flavour of text segment
    type: str  # type of text segment
    text: str  # content of the text segment
    text_classification: typing.Any = (
        None  # this could be used to store predictions of text classification
    )
    token_classification: typing.Any = (
        None  # this could be used to store predictions of token classification
    )
```

Document conversion with Deep Search

To convert documents with Deep Search, you pass a `source_path` containing one or more files to its document conversion API. The conversion runs on the server side, using Deep Search's fast and powerful model ecosystem to turn your unstructured documents into nicely structured text (headers and paragraphs), tables, and images by parsing everything in the documents, such as text, bitmap images, and line paths.

```python
# Initialize the Deep Search client from the config file
config = ds.DeepSearchConfig.parse_file(CONFIG_FILE)
client = ds.CpsApiClient(config)
api = ds.CpsApi(client)

# Launch the document conversion and download the results
documents = ds.convert_documents(
    api=api, proj_key=PROJ_KEY, source_path=INPUT_FILE, progress_bar=True
)
```

Once the documents have been processed, we can download the results from the Deep Search servers. For this example, we only use the `.json` files, which contain the text data for Argilla, so we do not consider the bounding box detection that Deep Search also provides.

```python
output_dir = tempfile.mkdtemp()
documents.download_all(result_dir=output_dir, progress_bar=True)

converted_docs = {}
for output_file in Path(output_dir).rglob("json*.zip"):
    with ZipFile(output_file) as archive:
        all_files = archive.namelist()
        for name in all_files:
            if not name.endswith(".json"):
                continue

            doc_jsondata = json.loads(archive.read(name))
            converted_docs[f"{output_file}//{name}"] = doc_jsondata

print(f"{len(converted_docs)} documents have been loaded after conversion.")
```

Extract text segments

We can now map the JSON data in `converted_docs` into our previously defined `DocTextSegment` data model. Note that you would normally add some pre-processing heuristics to assess the relevance of each segment and to pre-filter information before logging it to Argilla. In most scenarios, a simple classifier or even a basic keyword matcher will do the trick.
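As a rough illustration of such a pre-filter, the sketch below keeps only texts that are long enough and mention at least one keyword. The names `is_relevant` and `filter_texts`, the keyword list, and the length threshold are hypothetical choices made for this example; they are not part of Deep Search or Argilla.

```python
import re
from typing import Iterable, List


def is_relevant(text: str, keywords: Iterable[str], min_chars: int = 40) -> bool:
    """Return True if the text is long enough and contains any keyword as a whole word."""
    if len(text.strip()) < min_chars:
        return False
    return any(
        re.search(rf"\b{re.escape(keyword)}\b", text, flags=re.IGNORECASE)
        for keyword in keywords
    )


def filter_texts(texts: Iterable[str], keywords: List[str], min_chars: int = 40) -> List[str]:
    """Keep only the texts that pass the relevance check."""
    return [text for text in texts if is_relevant(text, keywords, min_chars)]


# Made-up sample segments, for illustration only
sample_texts = [
    "Abstract",
    "We propose a transformer model for table structure recognition in scanned documents.",
    "The weather was pleasant during the conference week in the city.",
]
kept = filter_texts(sample_texts, keywords=["table", "layout"])
print(kept)
```

The same check could be applied to each segment's text inside the extraction loop, before the segment is appended to the list.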

```python
text_segments = []
for doc in converted_docs.values():
    doc_title = doc.get("description").get("title")

    for idx, text_segment in enumerate(doc["main-text"]):
        # filter only components with text
        if "text" not in text_segment:
            continue

        # append the component to the list of segments
        text_segments.append(
            DocTextSegment(
                title=doc_title,
                page=text_segment.get("prov", [{}])[0].get("page"),
                idx=idx,
                name=text_segment.get("name"),
                type=text_segment.get("type"),
                text=text_segment.get("text"),
            )
        )

print(f"{len(text_segments)} text segments got extracted from the document")
```

Log text segments to Argilla

We can now log the extracted segments into Argilla. We will use each extracted segment to create one record for text classification and one for token classification.

```python
# Initialize the Argilla SDK
rg.init(api_url=ARGILLA_API_URL, api_key=ARGILLA_API_KEY)

# Initialize the spaCy NLP model for the tokenization of the text
nlp = spacy.load(SPACY_MODEL)
```

First, log some Text Classification Records

```python
# Prepare text segments for text classification
records_text_classification = []
for segment in text_segments:
    records_text_classification.append(
        rg.TextClassificationRecord(
            text=segment.text,
            vectors={},
            prediction=segment.text_classification,
            metadata=segment.dict(
                exclude={"text", "text_classification", "token_classification"}
            ),
        )
    )

# Submit text for classification
rg.log(records_text_classification, name=f"{ARGILLA_DATASET}-text")
```

Second, log some Token Classification Records

```python
# Prepare text segments for token classification
records_token_classification = []
for segment in text_segments:
    records_token_classification.append(
        rg.TokenClassificationRecord(
            text=segment.text,
            tokens=[token.text for token in nlp(segment.text)],
            prediction=segment.token_classification,
            vectors={},
            metadata=segment.dict(
                exclude={"text", "text_classification", "token_classification"}
            ),
        )
    )

# Submit text for token classification
rg.log(records_token_classification, name=f"{ARGILLA_DATASET}-token")
```
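The `text_classification` and `token_classification` fields stay `None` in the records above. As a hedged sketch of how they might be pre-filled, the snippet below builds keyword-based suggestions in the shapes that Argilla 1.x records are understood to accept: a list of `(label, score)` pairs for text classification and a list of `(label, start_char, end_char)` spans for token classification. The labels, the keyword table, and the helper names `suggest_spans` and `suggest_labels` are hypothetical and only serve the illustration.

```python
import re
from typing import Dict, List, Tuple

# Hypothetical label -> keywords table, for illustration only
KEYWORDS: Dict[str, List[str]] = {
    "METHOD": ["transformer", "classifier"],
    "DATASET": ["PubLayNet"],
}


def suggest_spans(text: str) -> List[Tuple[str, int, int]]:
    """Find keyword mentions as (label, start_char, end_char) spans, sorted by position."""
    spans = []
    for label, words in KEYWORDS.items():
        for word in words:
            pattern = rf"\b{re.escape(word)}\b"
            for match in re.finditer(pattern, text, flags=re.IGNORECASE):
                spans.append((label, match.start(), match.end()))
    return sorted(spans, key=lambda span: span[1])


def suggest_labels(text: str) -> List[Tuple[str, float]]:
    """Score each label by its share of the keyword mentions found in the text."""
    spans = suggest_spans(text)
    if not spans:
        return []
    counts: Dict[str, int] = {}
    for label, _, _ in spans:
        counts[label] = counts.get(label, 0) + 1
    return [(label, count / len(spans)) for label, count in counts.items()]


example = "We train a transformer on PubLayNet."
spans = suggest_spans(example)
labels = suggest_labels(example)
print(spans)
print(labels)
```

Values like these could be assigned to `segment.token_classification` and `segment.text_classification` before building the records. Argilla generally expects token classification spans to line up with token boundaries, so spans produced by a matcher like this may need to be checked against the spaCy tokens first.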

What’s next?

Now that the documents are converted and uploaded to Argilla, you can annotate the uploaded records and train your own models to include in the Deep Search ecosystem.

Visit the Deep Search Website or GitHub repository to learn about their features and check out their awesome publications and examples.