We are thrilled to introduce Argilla on Hugging Face Spaces. You can now deploy Argilla on the Hugging Face Hub with a few clicks. If you have a Hugging Face account, just use the one-click deployment button.

At Argilla, our goal is to provide NLP practitioners with the most powerful tool for iterating on NLP data. That's why we're excited to collaborate with Hugging Face and bring data labeling and Argilla's data-centric approach to an audience of thousands of practitioners.

How it works

To get started with Argilla, you first need to deploy the Argilla server. You can deploy it locally or through a cloud provider. With Argilla on Hugging Face Spaces, you can launch your own Argilla server quickly, for free, and without any local setup. You can be up and running in a matter of minutes:

- Deploy on HF Spaces. If you plan to use the Space frequently or handle large datasets for data labeling and feedback collection, upgrading the hardware to a more powerful CPU and more RAM can improve performance.

- Optionally, set up your user credentials and API keys. The default username and password are owner and 12345678.

- Copy the direct URL of your Space to use with the argilla library for reading and writing data.

- Open your favorite Python editor and start building amazing datasets! Keep reading to see some example use cases.
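The direct URL you copy usually follows a predictable pattern, which can help when you script against several Spaces. Here is a small sketch, assuming the common convention that the subdomain is `<owner>-<space-name>`, lowercased, with characters such as `_` and `.` turned into hyphens. `direct_space_url` is a hypothetical helper, so double-check its result against the URL shown under "Embed this Space":

```python
import re


def direct_space_url(owner: str, space_name: str) -> str:
    """Build the *.hf.space direct URL for a Space (hypothetical helper).

    Assumes the usual convention: "<owner>-<space-name>", lowercased, with any
    character other than a letter, digit or hyphen replaced by "-".
    """
    subdomain = f"{owner}-{space_name}".lower()
    subdomain = re.sub(r"[^a-z0-9-]", "-", subdomain)
    return f"https://{subdomain}.hf.space"


print(direct_space_url("dvilasuero", "argilla-template-space"))
# https://dvilasuero-argilla-template-space.hf.space
```

The printed value matches the Space URL used in the Gradio example later in this post.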

For more details, check out the step-by-step guide on our Docs. Now, let's explore some exciting use cases and applications.

Label a dataset and build a custom classifier with SetFit

The core application of Argilla is to efficiently label your datasets. This process can be further streamlined using pre-trained models and few-shot libraries like SetFit. You can learn how to label a dataset and train a SetFit model by following the step-by-step tutorial on Argilla docs. The tutorial can be run on Colab or Jupyter Notebooks. Here's most of the code you'll need:

Create dataset for data labeling

import argilla as rg
from datasets import load_dataset

# You can find your Space URL behind the "Embed this Space" button
rg.init(
    api_url="https://your-direct-space-url.hf.space",
    api_key="team.apikey",
)

banking_ds = load_dataset("argilla/banking_sentiment_setfit", split="train")

# Argilla expects labels in the annotation column
# We include labels for demo purposes
banking_ds = banking_ds.rename_column("label", "annotation")

# Build argilla dataset from datasets
argilla_ds = rg.read_datasets(banking_ds, task="TextClassification")

# Create dataset
rg.log(argilla_ds, "bankingapp_sentiment")

After this step, you can now start using the Argilla UI to label your data.
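Why can a handful of labelled examples be enough? SetFit first fine-tunes the sentence transformer contrastively on pairs of examples, and the `num_iterations` argument of the SetFit trainer controls how many pairs it samples. The sketch below is a simplified, pure-Python illustration loosely modelled on SetFit 0.x pair sampling, not SetFit's actual code; `generate_pairs` is hypothetical and not part of the SetFit API:

```python
import random
from typing import List, Tuple


def generate_pairs(
    texts: List[str], labels: List[str], num_iterations: int, seed: int = 0
) -> List[Tuple[str, str, float]]:
    """Sketch of SetFit-style contrastive pair generation (hypothetical helper).

    For every iteration and every example, draw one positive pair (same label,
    target 1.0) and one negative pair (different label, target 0.0).
    Needs at least two distinct labels, otherwise no negative can be drawn.
    """
    rng = random.Random(seed)
    pairs = []
    for _ in range(num_iterations):
        for text, label in zip(texts, labels):
            same = [t for t, lab in zip(texts, labels) if lab == label]
            different = [t for t, lab in zip(texts, labels) if lab != label]
            pairs.append((text, rng.choice(same), 1.0))
            pairs.append((text, rng.choice(different), 0.0))
    return pairs


texts = ["I love the app", "Transfer was fast", "Card got blocked", "Fees are too high"]
labels = ["positive", "positive", "negative", "negative"]
pairs = generate_pairs(texts, labels, num_iterations=20)
print(len(pairs))  # 160 = 2 pairs x 4 examples x 20 iterations
```

Because each labelled example yields many pairs, even a few dozen annotations from the Argilla UI give the model a reasonable amount of training signal.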

Load dataset and train SetFit model

Once you have labelled a few examples, you can run the code below to train a SetFit model:

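import argilla as rg
from sentence_transformers.losses import CosineSimilarityLoss
from setfit import SetFitModel, SetFitTrainer

# Assumes rg.init(...) was already called as in the previous snippet.
# Load the annotated records (same dataset name as in rg.log above)
labelled_ds = rg.load("bankingapp_sentiment").prepare_for_training()
labelled_ds = labelled_ds.train_test_split()

model = SetFitModel.from_pretrained("sentence-transformers/paraphrase-mpnet-base-v2")

# Create trainer
trainer = SetFitTrainer(
    model=model,
    train_dataset=labelled_ds["train"],
    eval_dataset=labelled_ds["test"],
    loss_class=CosineSimilarityLoss,
    batch_size=8,
    num_iterations=20,
)

trainer.train()
metrics = trainer.evaluate()

Build a feedback loop for Flan-T5 with Gradio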

Another application of Argilla is data and feedback collection from third-party apps. We designed Argilla to integrate seamlessly into existing tools and workflows. If you want to build datasets from custom apps or services, you can now easily connect Argilla with Gradio, Streamlit, or Inference Endpoints.

In this example, we connect a Gradio Space with an Argilla Space to collect Flan-T5 inputs and predictions. This data can be used to gather human feedback with Argilla and fine-tune Flan-T5 for your use case, or to build an RLHF workflow with TRL. Here's all you need to add to your app.py:

from typing import Any, List, Optional

import argilla as rg
import gradio as gr
from gradio.components import IOComponent  # Gradio 3.x import path
from gradio.flagging import FlaggingCallback


class ArgillaLogger(FlaggingCallback):
    def __init__(self, api_url, api_key):
        rg.init(api_url=api_url, api_key=api_key)
        self.num_flagged = 0

    def setup(self, components: List[IOComponent], flagging_dir: str):
        pass

    def flag(
        self,
        flag_data: List[Any],
        flag_option: Optional[str] = None,
        flag_index: Optional[int] = None,
        username: Optional[str] = None,
    ) -> int:
        text = flag_data[0]
        inference = flag_data[1]

        # build and add record to argilla dataset
        record = rg.TextClassificationRecord(inputs={"answer": text, "response": inference})
        rg.log(
            name="i-like-tune-flan",
            records=record,
        )
        # flag() returns the total number of samples flagged so far
        self.num_flagged += 1
        return self.num_flagged


io = gr.Interface(
    allow_flagging="manual",
    flagging_callback=ArgillaLogger(
        api_url="https://dvilasuero-argilla-template-space.hf.space",
        api_key="team.apikey",
    ),
    # other params (fn, inputs, outputs, ...)
)

You can interact with the resulting Gradio app below:

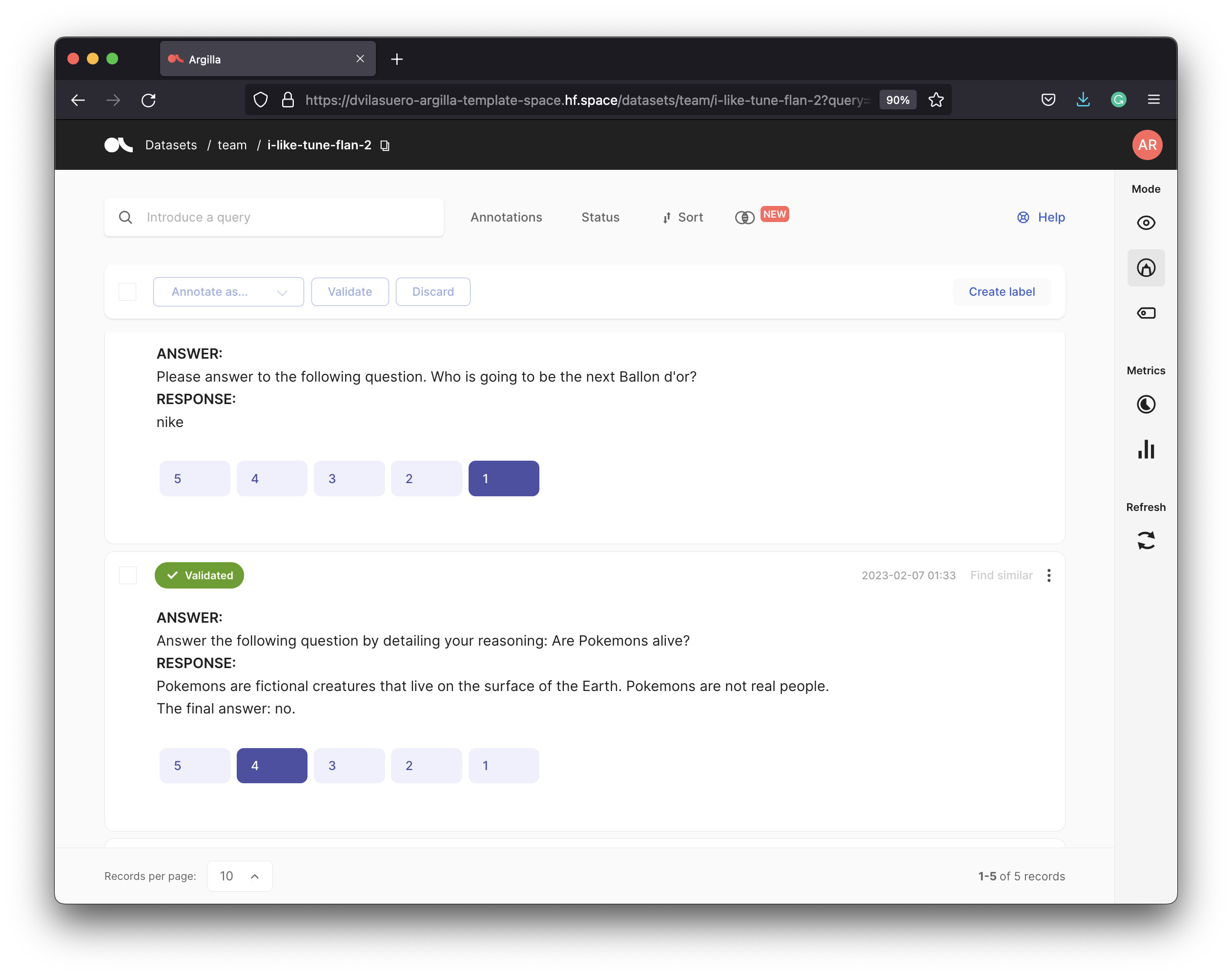

Whenever a user submits text and pushes the Flag button, both the input and the generated response are recorded in Argilla. With Argilla, you can evaluate the responses generated by Flan-T5 as shown below:

This demo Space is a modification of the amazing Space by Omar Sanseviero, but you can build your own Argilla-powered Gradio app with almost any NLP model on the Hub. Hint: use the Deploy button on a model's Hub page, launch the Space, and add the ArgillaLogger block.

Note that you could also use Gradio's flag options to collect feedback directly from users and record it in Argilla. If you are interested in this use case, connect with us on Argilla's Discord.

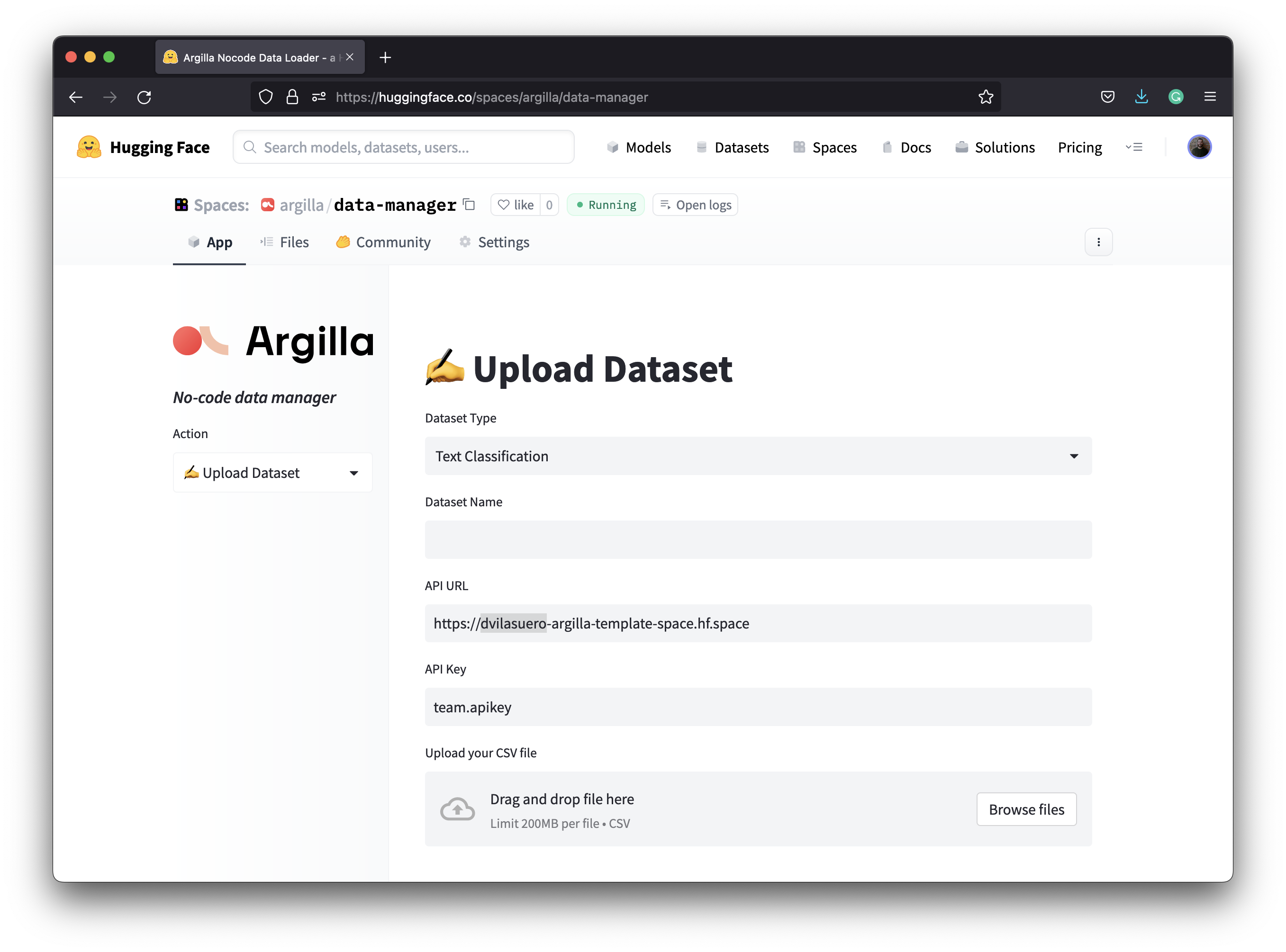
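The flag-options idea can be tried without a running server by exercising the record-building logic in plain Python. Here is a minimal sketch, assuming Gradio 3.x, where (as we understand it) passing `flagging_options=["Good", "Bad"]` to `gr.Interface` shows one flag button per option and hands the clicked option to the callback as `flag_option`. `InMemoryFeedbackLogger` is hypothetical: it mirrors the `flag()` signature used by `ArgillaLogger` but keeps records in a list instead of calling `rg.log`:

```python
from typing import Any, Dict, List, Optional


class InMemoryFeedbackLogger:
    """Hypothetical stand-in for ArgillaLogger that stores records in a list."""

    def __init__(self) -> None:
        self.records: List[Dict[str, Any]] = []

    def setup(self, components: List[Any], flagging_dir: str) -> None:
        pass  # nothing to prepare for an in-memory store

    def flag(
        self,
        flag_data: List[Any],
        flag_option: Optional[str] = None,
        flag_index: Optional[int] = None,
        username: Optional[str] = None,
    ) -> int:
        text, inference = flag_data[0], flag_data[1]
        record = {
            "inputs": {"answer": text, "response": inference},
            # The button the user clicked (e.g. "Good" or "Bad") becomes the feedback label
            "annotation": flag_option,
        }
        self.records.append(record)
        return len(self.records)  # total number of flagged samples so far


logger = InMemoryFeedbackLogger()
logger.flag(["What is the capital of France?", "Paris"], flag_option="Good")
count = logger.flag(["Translate 'hola' to English", "goodbye"], flag_option="Bad")
print(count)  # 2
```

In an Argilla-backed version, the same `flag_option` value could be passed as the `annotation` of the `rg.TextClassificationRecord`, so each click would arrive in Argilla already labelled.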

Use Streamlit to upload and download Argilla datasets

Not in the mood for coding? Check out this simple Streamlit app. It allows you to create Argilla datasets from CSV files and download annotated datasets in either CSV or JSON format.
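We won't reproduce the app itself here, but the round trip it automates is easy to picture. Here is a rough sketch in plain Python, assuming a CSV with a `text` column and an optional `label` column. The column names and both helpers are hypothetical and are not the app's actual code:

```python
import csv
import io
import json
from typing import Dict, List, Optional

Record = Dict[str, Optional[str]]


def read_csv_records(csv_text: str) -> List[Record]:
    """Turn CSV rows (text, optional label) into record-like dicts."""
    rows = csv.DictReader(io.StringIO(csv_text))
    # An empty or missing "label" cell means the row is still unannotated
    return [{"text": r["text"], "annotation": r.get("label") or None} for r in rows]


def export_annotated(records: List[Record], fmt: str) -> str:
    """Serialize only the annotated records as CSV or JSON text."""
    annotated = [r for r in records if r["annotation"] is not None]
    if fmt == "json":
        return json.dumps(annotated)
    if fmt == "csv":
        out = io.StringIO()
        writer = csv.DictWriter(out, fieldnames=["text", "annotation"])
        writer.writeheader()
        writer.writerows(annotated)
        return out.getvalue()
    raise ValueError(f"unsupported format: {fmt}")


records = read_csv_records("text,label\nGreat service,positive\nCard blocked again,\n")
print(export_annotated(records, "json"))
# [{"text": "Great service", "annotation": "positive"}]
```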

Embed Argilla on Jupyter Notebooks or Colab

Do you want to label data directly from a notebook? Just use the code snippet below and start labeling:

%%html
<iframe src="https://your-argilla-space.hf.space" frameborder="0" width="100%" height="700"></iframe>

Run Argilla Tutorials on Colab or Notebooks

Finally, you can run all Argilla tutorials with our new Open in Colab and View source buttons. These tutorials are organized by ML lifecycle stage, libraries, techniques, and NLP tasks. We hope you find one that fits your needs!

Next steps

The potential applications of combining Argilla Spaces with other tools and services are limitless. As we mentioned when we launched Argilla, we aim to involve more humans in the AI development process, and we look forward to seeing what you create with Argilla on Spaces. If you want to share feedback, showcase your creations, or discuss future plans, join our Discord!